Multiscale Structure Guided Diffusion for Image Deblurring

Super Short Description

- Paper Link

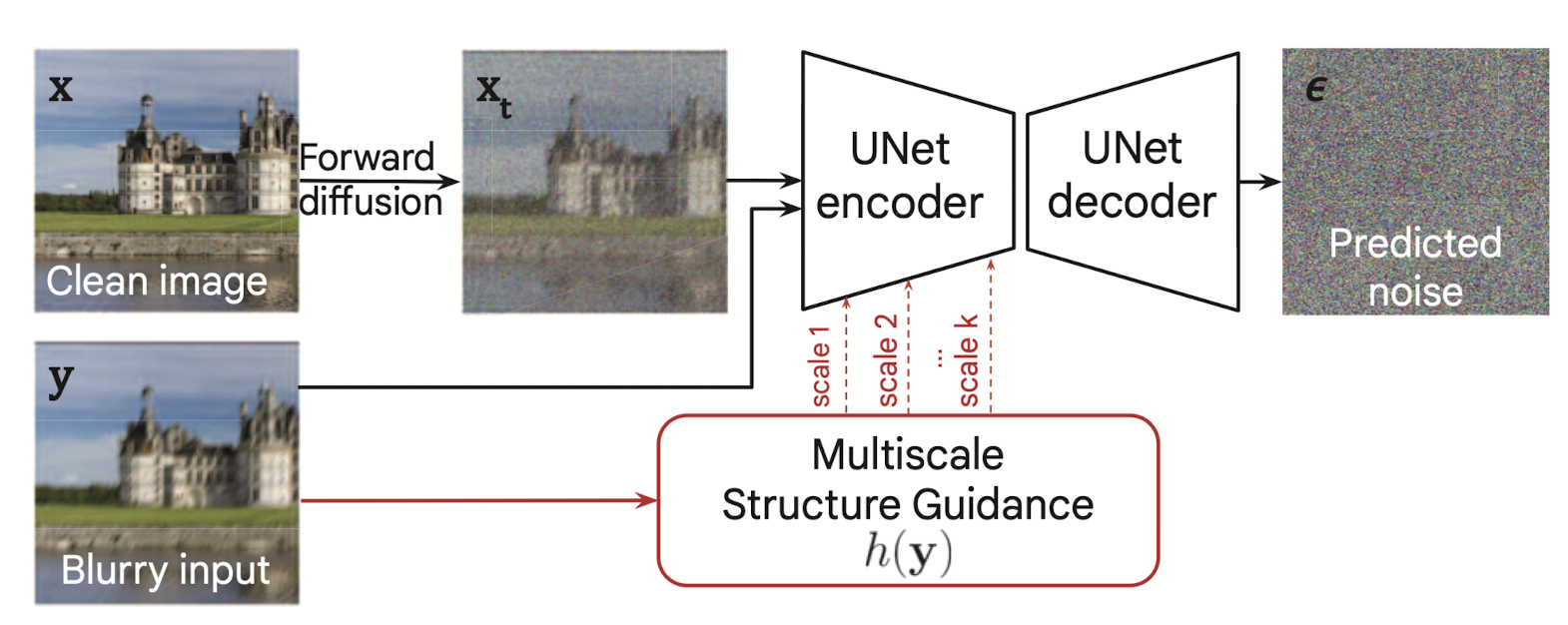

- This paper tackles the deblurring task using a conditional diffusion model in which the conditional input is the blurry image. Its primary focus is out-of-distribution performance. The technical novelty lies in how the conditional input is combined with the intermediate noisy image \(x_t\).

Learned Structural Guidance

Motivation

To improve out-of-distribution performance, the authors ensure two things. First, the model is encouraged to rely increasingly on coarse-scale information. This should help because, even for out-of-distribution test images, the coarse-scale information is more likely to resemble what was seen in the training data. Second, the relevant information in the conditional input is given an ‘optimal’ representation before it is merged with \(x_t\).

Implementation details

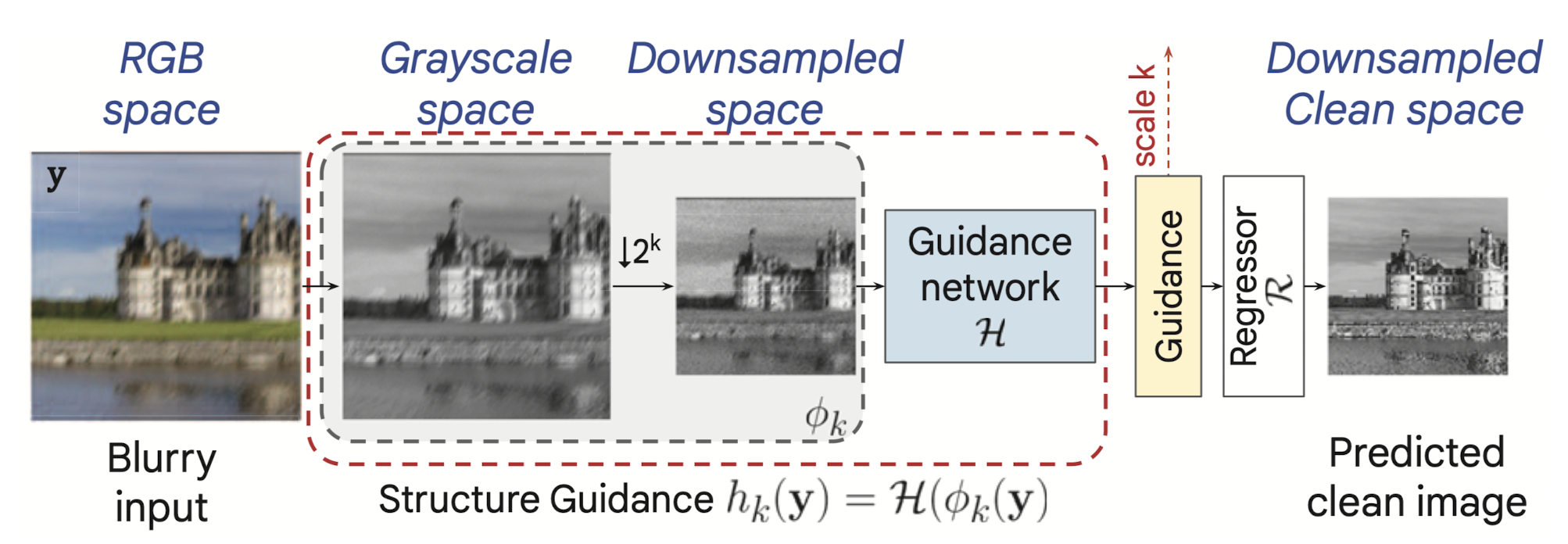

The conditional input, which is a blurry image, is downsampled multiple times. For each downsampled version, a guidance network is trained with supervision to predict the clean version of the blurry image at the corresponding resolution. This encourages the network to extract the most relevant information from the blurry image.
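
The per-scale supervision described above can be sketched roughly as follows. This is only an illustration under several assumptions that do not come from the paper: downsampling is done by 2x2 average pooling, the per-scale loss is a plain mean squared error, the losses are summed without weights, and images are grayscale nested lists. The names `downsample_2x`, `build_pyramid`, `mse`, and `guidance_loss` are hypothetical, and each guidance network is stood in for by an arbitrary Python callable.

```python
from typing import Callable, List

Image = List[List[float]]  # grayscale image stored as rows of pixel values


def downsample_2x(img: Image) -> Image:
    """Halve the resolution by averaging each non-overlapping 2x2 block.

    Average pooling is an assumption; the paper may use another filter.
    """
    h = len(img) // 2 * 2      # ignore a trailing odd row
    w = len(img[0]) // 2 * 2   # ignore a trailing odd column
    return [
        [
            (img[r][c] + img[r][c + 1] + img[r + 1][c] + img[r + 1][c + 1]) / 4.0
            for c in range(0, w, 2)
        ]
        for r in range(0, h, 2)
    ]


def build_pyramid(img: Image, num_scales: int) -> List[Image]:
    """Return the downsampled versions only: 1/2, 1/4, ... of the input size."""
    pyramid = []
    current = img
    for _ in range(num_scales):
        current = downsample_2x(current)
        pyramid.append(current)
    return pyramid


def mse(a: Image, b: Image) -> float:
    """Mean squared error between two images of identical shape."""
    n = len(a) * len(a[0])
    return sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb)) / n


def guidance_loss(
    blurry: Image,
    clean: Image,
    guidance_nets: List[Callable[[Image], Image]],
) -> float:
    """Sum of per-scale losses, with one (hypothetical) guidance net per scale.

    Each net sees the blurry image at its scale and is compared against the
    clean image downsampled to the same resolution.
    """
    num_scales = len(guidance_nets)
    blurry_pyr = build_pyramid(blurry, num_scales)
    clean_pyr = build_pyramid(clean, num_scales)
    return sum(
        mse(net(b), c) for net, b, c in zip(guidance_nets, blurry_pyr, clean_pyr)
    )
```

In a real training setup each callable would be a learnable network and this loss would be minimized by gradient descent; here a placeholder such as `lambda x: x` is enough to exercise the multiscale bookkeeping.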